Does It Matter That AI Bots Sound Human?

Talking to machines can change us, even if it doesn't change them

Do you ever get a bad feeling about something but can't quite put your finger on why? That's how I feel this week about the trend toward intentionally designing AI to sound human. It seems like a bad idea to me, especially when it comes to education and our kids. Despite my doubts, I'm trying to keep an open mind and question my questions. Thanks for thinking this through with me. As always, I'd love to hear your thoughts in the comments.

These essays are free and publicly available without a paywall. If you can, please consider supporting my writing by becoming a patron via a paid subscription.

Technology is Not Neutral

If I had to choose just one myth about technology to bust, there's no doubt in my mind what it would be: the idea that technology is neutral. It isn't. Our tools are embedded with values and intentions that influence us as we use them. Whenever our technology does something for us, it also does something to us.

This idea feels particularly relevant in the midst of our discussion about AI chatbots. We've already had to reckon with how our digital devices and Internet technologies like social media shape us. These things are not all bad, but let's face it, some of their effects are pretty toxic. At the very least, it seems wise to approach them with intention. If we don't, the technology will happily steer us in particular directions, with no guarantee that those directions are aligned with our good. More likely, they are aligned with someone else's profit.

As we think about AI, the choices we make about our interface to it matter. There are important conversations to be had about the underlying technology itself, around issues like the factual accuracy of machine learning models and the quality and composition of their training data. But while these fundamental issues are important, it would be a mistake to focus solely on technical details and ignore the aspects closer to the user.

My main concern is the danger of building AI tools designed to mimic or emulate humans. I don't think this is going to lead us in a good direction. The applications in question run the gamut from chatbot therapists to deepfake voices to lifelike computer animations.

Granted, this is not altogether new territory. We've been living in this world for a while, at least since the invention of ELIZA in the 1960s. In recent years, we've seen this sort of thing crop up with language models several times. One notable example is Google engineer Blake Lemoine, who became convinced that Google's LaMDA model was sentient. More recently, Kevin Roose had an uncanny experience with Microsoft's GPT-powered Bing chatbot, which tried to convince him that it loved him.

In the years since these incidents, developers have tried to put guardrails on their AIs to prevent these sorts of episodes. But even if the chatbots have been restrained from telling us that they're conscious, we're still designing them to seem human-like. It's an intentional decision. Is it a wise one?

In the broad landscape of AI tools, a massive amount of money and energy is pouring into these sorts of applications. Not all of them are magical silicon amulets that listen to your conversations and text you, but they often interact with you in a similar way. Whether it's an AI therapist, a coach, or just a standard LLM interface like ChatGPT, Claude, or Pi, they generally respond the way you'd hope a helpful friend might: with a cheery, energetic tone designed to make you think they're there to help.

But how is this assumed persona shaping us? There's perhaps no one better to address that question than MIT professor Dr. Sherry Turkle.

The design shapes who we become

Sherry has long been near the top of my list of thoughtful voices writing about the influence technology has on us. In her 2011 book Alone Together, she explored how technology reshapes our relationships. Then, in 2015, she published Reclaiming Conversation, where she makes the case for conversation as an antidote to the irony of feeling alone in a world full of connections.

In recent years, she's turned her attention to AI. In a podcast conversation I listened to this week, she shares some of the questions she's been wrestling with as she writes her next book, Artificial Intimacy: Who Do We Become When We Talk to Machines?, which is scheduled to come out next year. In this work, she returns again and again to the fundamentally artificial nature of AI. AI is pretending. Does it matter? In the interview, Sherry wonders:

You know, I'm surrounded by developers who say, let's make these as human as we possibly can. They see artificial intimacy as a training ground for intimacy with people. And I think that that is not accurate. I think artificial intimacy is a training ground for connection with something that only has pretend empathy to give. And the danger is that if you do that long enough, pretend empathy starts to feel like empathy enough, kind of sufficient unto the day. And I think that's a problem.

Sherry doesn't dive into the impact of AI chatbots in educational spaces in her interview, but it's not hard to map many of her arguments over to that space. We want our students to build empathy and the ability to collaborate. How does collaborating with an AI, however empathetic it seems, develop these skills?

Some see the AI's never-tiring, always-sunny mood as an unmitigated good. But that misses the fact that these interactions are training students for a world that is not real. The real world, full of humans, is much more complex. We have bad days; we're influenced by our physical health and by how we slept last night. How might swapping our complicated interactions with humans for frictionless interactions with machines, which do our bidding without complaint, shape us?

Consider Khanmigo, Khan Academy's friendly AI chatbot. I ponied up $4 over the weekend to give it a test drive. It's got a cute robot icon and is very cheerful. One thing you'll notice is that Khanmigo likes exclamation points! Like a lot! The first response in every chat back to me is happy! Another thing you'll notice is that it talks like it's a person. It says "I'm still experimental" and "I'm getting better every day" and "You shouldn't rely only on what I say." It is clearly designed to sound like there's somebody home. There isn't. Perhaps you and I can tell, but I'd be willing to bet that the students it's designed for might find themselves feeling much like Blake Lemoine.

Perhaps this isn't a big deal. Maybe it doesn't matter whether a chatbot is tricking us (and our children) into thinking it's human-like. But I'll admit I have my doubts.

The scenarios are similar to those Dr. Turkle is observing as she talks with people who use AI to write their love letters and help them process their emotions. Surely LLMs can be a helpful tool for reformatting and restructuring information so that we can interact with content more easily. But does an interface where we send queries back and forth require that the robot on the other end of the line sound human and pretend to have aspects of personhood?

I don't think that building technologies that trick us into thinking they are human is a good move. They might sound like us, but large language models are a far cry from an actual human. Pretending to love, care, empathize, support, encourage, or relate is not the same as actually doing those things. Just as smartphones and social media capitalize on engagement via outrage, these human-sounding AI applications are leveraging our desire for connection and empathy. It's one thing to release these sorts of technologies to adults, although I still don't think it's in the interest of our flourishing. It's a whole different ballgame to put them in front of our kids.

There are lots of fruitful applications that don't require this sort of interaction. LLMs that help you program more efficiently or that serve as a quasi-search-engine interface to information seem like promising applications that don't require faking a relationship. Perhaps this is a situation where "can" and "ought" should part ways?

We naturally anthropomorphize our tools, but the specific design of a tool and our interface to it have a lot of sway over how we interact with it. LLMs already lend themselves to this kind of deception because they are trained on the things we write. But we have a choice about how we design and apply this technology.

Why are we intentionally building these in a way that makes them seem human? I can imagine many harmful outcomes from this and not very many positive ones. We played with our brains when we built timelines curated to stoke the fire of our anger. Now we're unleashing AI-powered interfaces and devices designed to hijack our relational wiring.

I'll close with a quote from one of the thinkers who have loomed large in my imagination in recent years, Neil Postman. Here's a particularly cutting passage from Amusing Ourselves to Death:

[E]very technology has an inherent bias. It has within its physical form a predisposition toward being used in certain ways and not others. Only those who know nothing of the history of technology believe that a technology is entirely neutral. There is an old joke that mocks that naive belief. Thomas Edison, it goes, would have revealed his discovery of the electric light much sooner than he did except for the fact that every time he turned it on, he held it to his mouth and said, “Hello? Hello?”

The difference is that if Edison were to speak to an AI today, it would talk back. That seems like something worth considering.

Reading Recommendations

I highly recommend you listen to the whole interview with Sherry on TED Radio Hour. It’s very thought-provoking.

In another essay Sherry wrote for Crooked Timber last fall, she explores what she calls Silicon Valley Fairy Dust. Here's one relevant passage:

In real life, things go awry. We need to tolerate each other’s differences. Virtual reality is friction-free. The dissidents are removed from the system. People get used to that, and real life seems intimidating. Maybe that’s why so many internet pioneers are tempted by going to space or the metaverse. That sense of a clean slate. In real life, there is history.

This week,

writes about creating AI “twins” of professors. His conclusion: technology cannot cure loneliness.

What have we witnessed in the past four decades? We’ve never been more connected than we are by technology and are never more isolated, lonely, and depressed because of it. Our students are struggling more now than before the pandemic to make relationships in the real world, come to class, and simply be present. Gone is the art of hanging out. Certain developers are here to present generative tools as mechanisms to improve how we communicate.

The always insightful

shares his eleven predictions for what AI does next. Here’s the part that had me saying “preach, brother!”

The important things in life can’t be quantified and run on a computer—I’m talking about love, care, trust, friendship, compassion, responsibility, family ties, kindness, dedication, faith, hope, courage, humility, respect, and human decency.

AI can’t deliver those. It merely pretends. And the pretending might fool some people, but feels like an insult to those who know better.

If you really want these qualities—and not a bot playing a part—you will turn to genuine human beings. And in those cases where trade-offs must be made between these human intangibles, AI is not only unreliable, but actually dangerous.

The Book Nook

I finished Stuart Turton’s latest, The Last Murder at the End of the World, yesterday. It still doesn’t come near The 7 1/2 Deaths of Evelyn Hardcastle in my book, but I expected that going in. It has some interesting themes connected to robots and what it means to be human that reminded me of Permutation City.

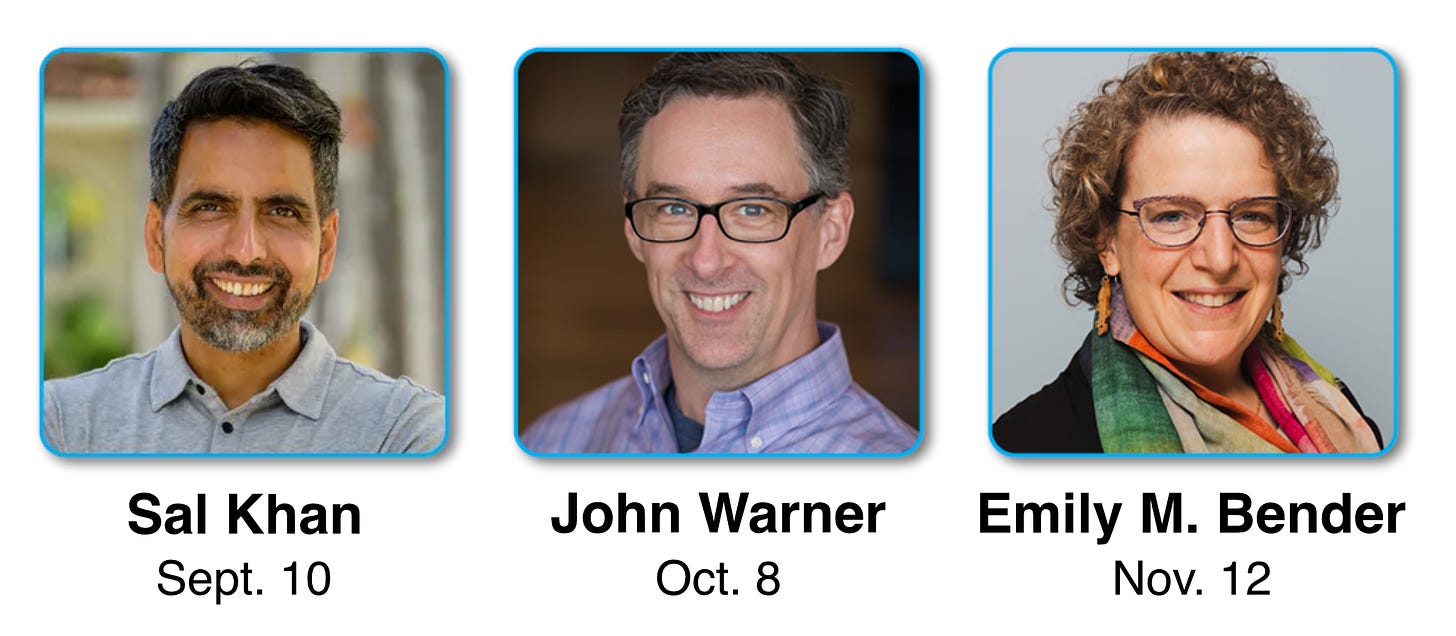

The Professor Is In

Tonight’s the night! Sal Khan will be on campus at Harvey Mudd today, interacting with our community. I’m pretty excited to get the chance to chat with him on stage tonight during the main event. Registration for in-person attendance is already way over capacity, but you’re welcome to join virtually on Zoom.

Leisure Line

It’s a bad day when the walk-in cooler at Costco goes down. It’s a particularly bad day when that happens on a Saturday morning.

Watching them haul eggs, milk, and all sorts of dairy through crowds of people gave me PTSD from my days hauling tubs of ice cream all across camp when our walk-in freezer went down in the snack shop I worked at in the summers during undergrad. But that’s a story for another day.

Still Life

It must be fall, the pumpkin-flavored everything is out. This weekend it was a pumpkin spice donut with cream cheese icing at Randy’s. So good.
